Introduction

Hello! I’m Rachel Brindle. I maintain this digital garden (or knowledge repository) here. Amongst other things, I’m a pilot, I’m building my own (electric) airplane, I’m a software engineer by trade, and I help maintain the Quick & Nimble testing frameworks for iOS.

This is inspired by Nikita’s knowledge repository.

The basic idea is that as I come across information, or generate my own, I write it down here. This is meant to provide a single, searchable database for things I know, so that I don’t have to keep searching for how I did something previously. It also helps reinforce things I’ve learned, both by forcing me to write them down and by making me write them in a way that should save my future self from spending so long figuring out how I did a thing.

No content on this site is generated using AI. I hate that I have to put that now.

Using this Site

Pages are organized in the sidebar to the left. They’re sorted alphabetically, and most top-level sections have nested subsections. Sections with a chevron next to them can be expanded to show additional subsections. You can also search by clicking or tapping on the search icon in the top left.

There is an RSS feed to track updated articles here. You can also paste any article’s URL into your RSS reader and it should discover the feed.

This uses mdbook as the underlying technology. Additionally, I have written a number of custom plugins (or post-processors, as mdbook calls them) to aid in writing this. They are described in meta/tooling.

Recently updated

The 10 most recently updated articles are:

- Dependency Injection (2024-03-06)

- Slow-Cooked Pinto Beans (2023-11-17)

- Composite Homebuilt Aircraft (2023-09-23)

- Vim (2023-09-21)

- Testing (2023-08-12)

- Async/Await (2023-08-12)

- Testing Async/Await Code (2023-08-12)

- To Don’t (2023-08-12)

- Antarctica (2022-12-17)

- Flying (2022-11-06)

Last updated: 2024-03-05 16:12:53 -0800

Antarctica

The seventh continent, at the bottom of the world.

Antarctica is the highest, driest, and most desolate place on Earth.

Jon Johanson’s RV-4 Flight to McMurdo

In 2003, Australian Jon Johanson had the great idea to fly his modified RV-4 down to McMurdo. He even overflew the south pole station, before turning back and landing at McMurdo. There he was stranded for a bit as McMurdo didn’t want to supply him with fuel. He eventually had fuel donated to him and he was able to fly out.

Writeup on Jon Johanson’s flight at SouthPoleStation.com

US Antarctic Program

The USAP manages the US-run stations and research vessels, such as McMurdo and the south pole station.

- USAP.

Working in Antarctica

Again, this is only relevant for US-based persons such as myself. You can work directly through the USAP in either a management position (not likely to deploy), or a scientist position (more likely to deploy). Additionally, the USAP contracts with several companies for support staff.

I find the idea of working in Antarctica intriguing, but I would not be willing to make the sacrifice required to do so. It would definitely be an adventure, but being cut off from the rest of the world for 6 months sounds like a bit too much.

South Pole Station

Highly interesting Wendover Productions video on Living at the South Pole.

Last updated: 2022-12-16 23:15:35 -0800

Antiracism

Resources, twitter threads and the like that have opened my eyes about the extent to which racism is a thing, and what to do about it.

Last updated: 2020-12-22 17:22:26 -0800

Astronomy

I love everything related to space, both human exploration of space, and the observation of objects in space.

Resources

Resources for learning astronomy.

Richard Pogge’s/OSU’s Astronomy podcasts

Richard Pogge is a professor at OSU. He recorded the lectures for a few of his “astronomy for non-astronomy majors” classes and posted them online as podcasts. They’re absolutely enrapturing to listen to. One of the things I love about these is that he also covers a lot of the history behind astronomy, in addition to what we currently know.

- AST 161, from Fall 2007, is an introduction to solar system astronomy. This is absolutely fascinating: in addition to things like the planets and the sun, he also talks about how humans developed astronomy, how astronomy has influenced all sorts of our culture, and some of the modern things we’re learning as we’ve sent spacecraft to objects in the solar system.

- AST 162, from Winter 2006, is an introduction to stars, galaxies, and cosmology. It covers a bit more of the science behind stars, as well as a lot of the modern history behind how we gained that knowledge, and how we know it’s correct.

- AST 141, from Fall 2009, is an introduction to astrobiology. I haven’t listened to this one yet, but I’m very much looking forward to listening to it.

Last updated: 2021-04-12 12:26:52 -0700

Astrophotography

Taking pictures of the sky!

See Cafuego’s page on software for osx.

See also the Mac Observatory site.

I use the following software:

- AstroDSLR for capturing from my DSLR.

- Nebulosity for stacking images.

Theory and Books

The standard recommended reading is The Deep-sky Imaging Primer.

- A bunch of other useful links for beginners. Though, the whole cloudy nights forum is super useful.

- Other useful threads for beginning imaging on cloudy nights

- Jerry Lodriguss’s astrophotography techniques

Finding a site

Find a local dark sky site. In LA, I like Joshua Tree National Park. However, being able to easily access far-away dark sky sites is one of my primary reasons for learning to fly.

Equipment

You can get away at a bare minimum with just a camera and a tripod. My equipment checklist is:

- Camera

- 50 mm lens, because wide field shots are fun.

- Telescope [1]

- Telescope camera mount

- (barlow lens, T-ring, etc.)

- Bahtinov mask.

- Telescope camera mount

- Equatorial Mount

- Motors for said mount

- Batteries for the motors

- Computer (Strictly speaking, this isn’t necessary - my camera can be set to take a series of photos at once)

- USB-A to Mini-USB-A (to talk to camera).

- RED flashlight - white will ruin your night sight. You also want low-lumen, for the same reason.

- Water

- Coffee

- Snacks

- Camping chair

- Sleeping pad/bag (even if you plan to stay up all night, bring these).

- Pillow

- Paper and Pen.

- A book or something else to do while the computer does all the work.

Be sure to set the computer to “night shift” mode [2], as red as possible, before it’s dark.

Go there, set up camp. Preferably be set up before dark.

Jerry’s list of beginner equipment for astrophotography, which is a potential source for expansion.

Actually Taking Photos

Regardless of how you use it, be sure to write down what you’re taking a photo of when you do it. Even if you know what the constellation/body you’re photographing is anyway.

Also, for stacking [3] reasons, the more photos you take, the better, but there are diminishing returns [4].
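As a rough sketch of those diminishing returns, assuming the usual rule of thumb that the quality (signal-to-noise) of a stack grows with the square root of the number of frames. The function name is made up for illustration, and real results depend on the camera and the sky:

```python
import math

def frames_needed(current_frames: int, improvement: float) -> int:
    """Frames required to make a stack `improvement` times cleaner.

    Assumes quality grows with sqrt(number of frames), which is a rule of
    thumb for random noise, not a guarantee for any particular setup.
    """
    return math.ceil(current_frames * improvement ** 2)

# Starting from a 100-frame set, like the ones I usually take.
for factor in (2, 3, 5):
    print(f"{factor}x better needs {frames_needed(100, factor)} frames instead of 100")
```

In other words, doubling the quality of a 100-frame stack already means a 400-frame night.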

Using a computer

Use AstroDSLR from the computer to control the camera. Keep the camera in bulb mode to allow the software to control exposure time. Otherwise follow these instructions.

Make a different folder for each different set of photos you take.

Drift measurement with AstroDSLR

Copied from their website:

For polar alignment by the drift method or for the validation of the guiding you can use drift measurement helper panel.

The scale of the graph is adjusted automatically. Blue curve represents drift in X and red curve in Y direction. Blue value in lower left corner is drift per image in X direction and red value in lower right corner is drift per image in Y direction.

To use the panel for polar alignment, rotate camera (to align RA/Dec axes along X/Y directions), start preview in endless loop, select accordingly bright star and use drift method.

Please note, that the graph is cleared every time you select the star in the preview image.

Without a computer

Put the camera in manual mode, and have it set to average.

Star Trails

Sometimes you’re going for that really cool effect, other times you’re not.

Here’s an article from Jerry Lodriguss on how to deal with star trails.

PostProcessing

Nebulosity doesn’t read the color information from your raw files. Convert them to jpeg, because that’s still better than grayscale images.

for i in *.cr2; do sips -s format jpeg "$i" --out "${i%.*}.jpg"; done

From Nebulosity, open batch -> align and combine images. Select “Translation + Rotation + Scale”, click “OK”, and select the images to stack. Now, select the same star in each photograph as it prompts you. You’re going to go through the sets 3 times (so that it can correct for translation/rotation/scale). Now, do some manual editing, and save the end result.

Post to Instagram [5] or whatever. Use it as your new desktop background.

[1] Much better advice on how to select one. Though, usually, the best one is the one you already own.

[2] Or use f.lux to remove as much blue from your screen as possible.

[4] It’s essentially a square-root relation: to get 5x better quality, you need to take 25x more images.

[5] Flume seems to be a decent OSX client for Instagram. The pro version is worth it.

Last updated: 2020-01-10 11:49:17 -0800

Gallery

Sorted by date

Last updated: 2019-07-13 16:35:48 -0700

2019-07-12 Joshua Tree

Went out to Joshua Tree National Park to try out a new scope! I’m very pleased with the results.

Jupiter

One of the first things I photographed!

This is combined from 200 separate images, in two sets of 100. The first set was to get details of Jupiter’s clouds (ISO 100, 1/200th second exposure; I could have shortened the exposure length even more to get better details). The second was to get details of the 4 Galilean moons (ISO 100, 1/10th second exposure). I then stacked each set of 100 into its own image, and replaced the overexposed Jupiter in the picture with the moons with the much better image of Jupiter’s clouds. I think it worked out pretty well.

Andromeda Galaxy

I had to wait until about 1:30 AM for Andromeda to be sufficiently high in the sky to clear some ground obstructions (rocks).

This is composed of 100 images stacked together. 10 second exposure, ISO 2000 or so. (Any longer exposure time introduced noticeable star trails, as my mount wasn’t perfectly aligned).

Pleiades

This was the last picture I took - my camera’s battery died after taking the first of what would have been 100 images. This was taken at approximately 3 AM.

10 second exposure; I forget the ISO speed.

Last updated: 2019-07-14 10:21:26 -0700

2019-08-31 Joshua Tree

First weekend following a new moon, let’s get some photos of the stars!

Unlike last time, I made sure to have both camera batteries fully charged. At some point, though, I need to figure out a way to power the things from either my car or possibly an ebike battery.

Orion Nebula

This was surprisingly easy to capture. This was done with a series of 100 images, ISO 3200, and a 15 second exposure. Stacked (more-or-less automatically), then processed in post to bring out that extra oomph. Cropped from the original to bring more attention to the nebula itself. This nebula is really bright.

California Nebula

The California Nebula (NGC 1499) is an emission nebula in the constellation Perseus.

This was quite difficult to capture. Because it’s so red, you can’t visually see the California Nebula. I could barely make it out on the highest ISO setting my camera can do (25600, wow) at a 20 second exposure. Couldn’t do any longer without introducing star trails. I’d love to revisit this once I have autoguiding figured out - or at least with a much better aligned telescope.

Post-processed to bring out the red as much as I could.

Last updated: 2019-09-08 20:29:20 -0700

Image Stacking

Image stacking is the process of combining multiple images of the same thing into a single image. This is done for multiple reasons - to increase dynamic range, to reduce the effects of noise, etc.
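To make the noise-reduction part concrete, here is a minimal sketch of the simplest kind of stacking: averaging each pixel across frames. It assumes the frames are already aligned, treats an image as a flat list of made-up pixel values, and uses simulated random noise. It is not how Nebulosity or any other real stacking tool is implemented.

```python
import random
import statistics

def stack_mean(frames):
    """Combine same-sized frames by averaging each pixel position."""
    return [statistics.fmean(pixels) for pixels in zip(*frames)]

def simulate(true_pixels, n_frames, noise_sigma, seed=0):
    """Make noisy copies of a 'true' image (a flat list of pixel values)."""
    rng = random.Random(seed)
    return [[p + rng.gauss(0, noise_sigma) for p in true_pixels]
            for _ in range(n_frames)]

def rms_error(image, truth):
    """Root-mean-square difference between an image and the true image."""
    return (sum((a - b) ** 2 for a, b in zip(image, truth)) / len(truth)) ** 0.5

truth = [10.0, 50.0, 200.0, 30.0] * 50   # made-up pixel values
frames = simulate(truth, n_frames=100, noise_sigma=20.0)

print("single frame error:", round(rms_error(frames[0], truth), 1))
print("stacked error:     ", round(rms_error(stack_mean(frames), truth), 1))
```

The stacked error comes out well below the single-frame error, because the random noise partly cancels out while the actual signal does not.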

Theory

Keith Wiley has a really good article on the theory behind image stacking.

Last updated: 2019-06-05 14:54:06 -0700

Astro-Tech AT102ED Telescope

It’s a nice, relatively cheap refracting telescope.

Making the Bahtinov Mask

The outer diameter of the leading element is 122mm.

I have a bahtinov mask available here. It was generated from this svg, which itself was generated from this page. The relevant specs are a 102mm diameter lens and a 714mm focal length.

This is also available on thingiverse

Focusing

With a Canon EOS 6D on a T-ring (1.25 inch), this focuses at just past 41mm.

With a Canon EOS 6D on a 2-inch T-Ring, this focuses at about 82.5mm.

Last updated: 2020-12-22 16:44:36 -0800

Celestron CG-4 Equatorial Mount

My notes on how to use this mount.

Setup is relatively simple, but much more involved than altazimuth mounts we might be used to.

Balancing

First, we balance in the right ascension, then in declination.

RA balancing is necessary for accurate tracking when using the motor. It also eliminates undue stress on the mount.

DEC balancing is necessary to prevent sudden motions when the DEC clamp is released.

Right Ascension

- Release the RA clamp (the lower clutch), and position the telescope off to one side of the mount. The counterweight bar should be horizontal on the opposite side of the mount.

- Release your hold on the telescope - gradually - to see which way the telescope rolls (to one direction or the other).

- Move the counterweight as necessary to balance the telescope (remains stationary when the RA clamp is released).

- Tighten locking screw to hold counterweights in place.

Declination

- Release the RA clamp and position the telescope off to one side of the mount - basically, same start as when balancing in RA.

- Lock the RA clamp to hold the telescope in place.

- Release the DEC clamp, and rotate the telescope until the telescope is parallel to the ground

- Gently release your hold on the telescope to see which way it rotates. As before, don’t let go entirely.

- Move the telescope on the mounting bracket in either direction until the telescope doesn’t move, as tested in step 4.

- Tighten the mounting bracket screws.

Polar Alignment

Now we get to the part that makes equatorial mounts actually different from altazimuth mounts. This is necessary to track the stars correctly.

The goal is to place the telescope’s axis of rotation parallel to the Earth’s axis of rotation. This is done by moving the telescope vertically (altitude) and horizontally (azimuth), not in RA or DEC.

Note that the mount can really only be adjusted between 20 and 60 degrees.

There are a few ways to do this.

Latitude scale

This is the easiest way to align a telescope. It also can be done in daylight, because it only requires that you know which way is (true) north, and your latitude (degrees above the equator). This is also the least accurate, but it gets close enough for short exposure astrophotography.

- Make sure the polar axis of the mount is pointing due north.

- Level the tripod (there’s a bubble level built into the mount for this purpose)

- Adjust the mount in altitude until the latitude indicator points to your latitude.

Pointing at Polaris

This is conceptually simple. Polaris is less than a degree away from the celestial north pole, so you use Polaris as a stand-in for the celestial north pole. It’s about as accurate as the latitude scale method.

- Make sure the polar axis is pointing north.

- Loosen the DEC clutch knob and move the telescope so that the tube is parallel to the polar axis. When this is done, the declination setting circle will read +90 degrees. If the declination setting circle is not aligned, move the telescope so that the tube is parallel to the polar axis.

- Adjust the mount in altitude and/or azimuth until Polaris is in the field of view of the finder.

- Center Polaris using those same altitude/azimuth controls. Do not move the telescope in RA or DEC.

Declination Drift

This takes the longest amount of time, but produces the best results. In this, you’re looking at two stars to see how much they drift in declination over time, which tells you how out of alignment you are from the polar axis. Because this takes a while, you should first get a rough alignment (using either the latitude scale or pointing at Polaris).

The idea here is to choose two bright stars - one near the eastern horizon and one due south near the meridian. Both should be near the celestial equator (0 declination).

For the southern star, choose one within half a degree of the meridian, and 5 degrees of the celestial equator. If the star drifts north, the polar axis is too far east. If it drifts south, the polar axis is too far west.

Once that star no longer drifts, we move on to the eastern star. This should be 20 degrees above the horizon and within 5 degrees of the celestial equator. If it drifts south, the polar axis is too low. If it drifts north, the polar axis is too high. Adjust the latitude scale to fix this.

Modifications

Modifying Motor Controller for Autoguiding

See this guide from Shoestring Astronomy.

Last updated: 2019-09-14 20:55:42 -0700

Bae

My bae is pretty great. As of this writing, she has a Masters in computer science, and is working on a PhD in robotics and electrical engineering. I have 3/4 of an undergrad computer science degree.

Talk Practices

She sometimes gives talks, and she likes to practice for me. Sometimes I take notes on them. I rarely actually understand what she’s talking about. But she’s cute nonetheless.

2019-07-17 Observability in Control Theory

She practiced a talk on control theory in front of me. These are my notes. The talk content might be wrong - she’s still learning about this.

E.G. Drone on top of a car, measuring the car.

It has a method to track the target (car). The way it does it is to measure the state of the target.

x(t+1) = A * x(t)

A is a transition matrix - it maps the target from the current state to the next (next state = current state * transition matrix)

Drone can measure the target’s “process” - it can estimate the next state of the target because it has the transition matrix encoded in it.

Let’s say drone also has some radar/camera sensors (other sensors).

Now, y(t) = C * x(t) - y is the drone’s measurement of x(t). C maps the current state to what the drone is observing.

This format is how we’d model a dynamical system. Usually there’d be other terms for noise (B - process noise, D - measurement noise).

Given this system, it is observable if, given y(0), y(1), ..., y(l), we can backtrack to the initial state x(0).

How do we get from the measurements to the original state (from y(0), y(1), ... to x(0))? We know that x(1) = A * x(0), and that x(2) = A * x(1) = A^2 * x(0). Therefore, x(l) = A^l * x(0).

Similarly, y(0) = C * x(0) and y(1) = C * x(1) = C * A * x(0), and so forth: y(l) = C*A^l*x(0). This can then be rewritten as a system of linear equations, like so:

| y | observability matrix |
|---|---|
| y(0) | C |
| y(1) | CA |
| y(2) | CA^2 |
| … | … |
| y(l) | CA^l |

Can then be solved for x(0) if we have A and C. So, we could write this out if we have matrices A and C, but it’s a long matrix, so it’d be difficult to compute.

How do we know that this is observable if it’s computationally hard to get to a unique x(0)?

So, this can be written as:

y_bar = O * x(0) (O = observability matrix). If rank(O) == n (rank = number of linearly independent columns, n = number of entries in the target’s state vector), then the system is observable.

Doesn’t tell you how observable it is, or how much information you need in order to get to x(0) - it could be observable, but it might be infeasible to observe.
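Here is a small sketch of that rank test in plain Python. The A and C matrices are a made-up example (a target whose state is position and velocity), not anything from the talk. The code stacks only C, CA, ..., CA^(n-1), which is enough for the rank check because higher powers of an n x n matrix A add no new independent rows.

```python
from fractions import Fraction

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]

def observability_matrix(A, C, steps):
    """Stack C, CA, CA^2, ..., CA^(steps-1) on top of each other."""
    rows, block = [], C
    for _ in range(steps):
        rows.extend(block)
        block = matmul(block, A)
    return rows

def rank(M):
    """Rank by Gaussian elimination over exact fractions."""
    M = [[Fraction(v) for v in row] for row in M]
    r = 0
    for col in range(len(M[0])):
        pivot = next((i for i in range(r, len(M)) if M[i][col] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, len(M)):
            factor = M[i][col] / M[r][col]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
    return r

# Hypothetical target: state = (position, velocity), one time step per tick.
A = [[1, 1],
     [0, 1]]
n = len(A)

C_position = [[1, 0]]   # the drone's sensor sees position only
C_velocity = [[0, 1]]   # the drone's sensor sees velocity only

for name, C in (("position", C_position), ("velocity", C_velocity)):
    O = observability_matrix(A, C, steps=n)
    print(name, "sensor observable?", rank(O) == n)
```

With only position measurements, the velocity can still be worked out from how the position changes, so the system is observable. With only velocity measurements, the starting position can never be recovered, so it is not.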

Measuring Observability

So, measuring observability:

Observability Gramian - a different kind of matrix that is used to tell how observable a system is.

For all t from 0 to l, sum the norm of y(t) squared. This is the energy of y, and it is what the observability Gramian G measures. The higher, the more observable.

energy = sum from 0 to l of transposed(C * A ^ t * x(0)) * (C * A ^ t * x(0)) = transposed(x(0)) * G * x(0), where G = sum from 0 to l of transposed(C * A ^ t) * (C * A ^ t).

If we take the determinant of G and it is high, then we have high energy in y, and the system is highly observable.

We want to maximize the minimum eigenvalue of G in order to have high observability.

These are all ways to say how observable a system is.
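A sketch of those measures for a made-up 2-state target (position and velocity), taking G to be the sum from 0 to l of transposed(C * A^t) * (C * A^t), so that the energy of y is transposed(x(0)) * G * x(0). The helper names are mine, and the eigenvalue formula only works for symmetric 2x2 matrices.

```python
import math

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]

def transpose(X):
    return [list(row) for row in zip(*X)]

def gramian(A, C, l):
    """G = sum over t = 0..l of transposed(C A^t) * (C A^t)."""
    n = len(A)
    G = [[0.0] * n for _ in range(n)]
    CAt = C
    for _ in range(l + 1):
        term = matmul(transpose(CAt), CAt)
        G = [[g + t for g, t in zip(g_row, t_row)] for g_row, t_row in zip(G, term)]
        CAt = matmul(CAt, A)
    return G

def det2(G):
    return G[0][0] * G[1][1] - G[0][1] * G[1][0]

def min_eig2(G):
    """Smallest eigenvalue of a symmetric 2x2 matrix (closed form)."""
    trace = G[0][0] + G[1][1]
    return trace / 2 - math.sqrt(max(trace * trace / 4 - det2(G), 0.0))

def energy(G, x0):
    """transposed(x0) * G * x0, the summed squared norm of the measurements."""
    return sum(x0[i] * G[i][j] * x0[j] for i in range(len(x0)) for j in range(len(x0)))

A = [[1, 1], [0, 1]]   # hypothetical position/velocity dynamics
for name, C in (("position sensor", [[1, 0]]), ("velocity sensor", [[0, 1]])):
    G = gramian(A, C, l=2)
    print(name, "G =", G, "det =", det2(G), "min eigenvalue =", round(min_eig2(G), 3))
```

The velocity-only sensor gives a determinant and minimum eigenvalue of zero, which matches it being unobservable: there is a direction in state space (the position) that puts no energy into y at all.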

Usefulness

Why do this?

You can use this information to calculate how well a Kalman filter works by calculating the observability Gramian.

You can determine how well you designed your system.

Last updated: 2021-11-27 22:07:29 -0800

Bicycling

Bicycling is the best form of ground transportation.

No, but seriously, here’s why bicycling is the best.

- Low cost of entry, and very low recurring costs. (You can get a decent hybrid for a few hundred dollars. Maintenance can be done by anyone, but even if you have a bike shop exclusively do your maintenance, it’s less than $100/year to maintain.)

- Keeps you fit & healthy. Yes, maintaining weight is entirely about your diet, but cycling helps you stay fit & healthy regardless of weight.

- Relatively speedy. Most people can easily maintain 10 mph on flat ground; with a little training you can easily double that.

- Incredibly nimble. Bikes take up very little space, and have very low mass making them easy to maneuver around obstacles.

- Extremely versatile. You can carry just about anything with a bike. Larger items require more planning, but you can certainly carry full sheets of plywood with a bicycle trailer.

- In a lot of urban environments, bicycling is the fastest mode of personal transport. You can filter through car traffic, don’t have to look for parking, and can take shortcuts. Even in the Venice, CA area, bicycling is faster than driving a car to destinations less than 3 to 5 miles away.

Subpages

Links

Last updated: 2022-08-14 18:29:20 -0700

BBSXX Electric Bike Motor

One of the most popular electric bicycle conversion motors, the Bafang bbs02 is a 750 watt mid-drive motor and the Bafang bbsHD is a 1000 watt mid-drive motor. They are similar in design and are commonly referred to interchangeably as a BBSXX.

Installing

Notes while installing one of my bbs02 units

What you need:

- Pedal removal wrench

- A bafang install tool

- crank arm puller tool

- Long wrench. You can never have too much leverage.

- Grease

- Medium-grade thread locker

- A set of 1-10mm hex bolt drivers/wrenches

- Paper Towels

Steps:

- Remove the old bottom bracket

  - Remove the pedals

  - Remove the crank arms (you will want as long a wrench as possible for doing this)

  - Remove the old bottom bracket (Again, a long wrench is a lifesaver, just for the extra torque you can apply on this)

    - Remember to remove the non-drive side part first (loosens counter-clockwise - left turn)

    - Remember that the drive-side part is reversed (loosens clockwise - right turn)

- Install the motor

  - Grease the inside of the bottom bracket shell (on the bike frame)

  - Insert the motor into the bottom bracket shell.

  - Install the lock ring mechanism. Use one of the “bafang install tools” you can find easily by googling. This is also one of the places you want to at least be close to the appropriate torque rating.

  - At this point, the drive unit should be securely installed and shouldn’t move at all as you try to push down on it.

- Install the chain ring

- Install the cranks

- Install the pedals onto the crank arms

- Install the extra cabling.

Uninstalling

Notes I took while uninstalling one of my bbs02 units to move it to a different bike.

What you need:

- Pedal removal wrench. The longer the wrench, the better (more torque!)

- Grease

- Medium-grade thread locker

- Spline tool

- Spanner wrench

- Crank arm puller

- A set of 1-10mm hex bolt drivers/wrenches.

- Paper Towels

Steps:

- Remove the pedals (using the pedal wrench).

- Once the pedals are removed, remove the crank arms. Clean off the existing grease and set aside.

- Remove the chainring. This uses 4mm hex bolts.

- Remove the lock ring mechanism. This is the top splined nut, y-shaped thing, the lock-ring, and the washer underneath the lock-ring. Remove the nut using a spline tool, use a 5mm bolt driver to remove the bolts on the y-shaped thing, and use a spanner to remove the lock-ring. The washer will come off. Set aside all of these close to each other.

- At this point, the drive unit will slide out of the bottom bracket of the bicycle. Be sure to unplug all the cables that are connected to it first, of course.

- Remove the cables remaining on the bicycle.

Last updated: 2022-08-14 18:29:20 -0700

Ebikes

Electric Bicycles, or ebikes, manage to have all of the benefits of a standard bicycle (very cheap to buy and own, nimble, versatile, oftentimes faster than driving), and they reduce or eliminate the effort required to actually get around. I am a huge fan and proponent of electric bicycles (and really, EVs of all kinds. Bring on the electric revolution).

Motors

There are basically 2 ways to mount the motors in an ebike nowadays: Hub, and Mid-Drive.

Hub motors are motors directly embedded into one of the wheels of the bike. Mid-drive motors are mounted such that they use the bike’s drivetrain to deliver power.

Hub Motors

Hub motors work by embedding the motor into the wheel, which makes installing a hub motor onto an existing bike super easy - all you need to do is replace the wheel, mount the other electronics, and go.

In general, hub motors are thinner than mid-drives. They do, after all, have to fit inside of a bicycle wheel. And even fat tire bikes have relatively thin wheels. This generally means that they’ll have a larger diameter than a mid-drive motor of the same power rating, which gives them a larger surface area and improves heat dissipation.

Hub motors are also unsprung weight. On a bike without suspension, this doesn’t really mean anything, but on a bike with suspension, it reduces the effectiveness of the suspension, which both reduces traction on bumpy roads and makes the ride itself bumpier.

Hub motors, being independent of the bike’s drivetrain, also offer redundancy. In the event that the drivetrain fails, you can get home using only the motor.

All motors have a most efficient RPM and a maximum RPM. For hub motors, these translate directly into a most efficient speed and a max speed. These are based on the voltage of your system, the specific motor, and the diameter of your wheel. Larger diameter wheels will have higher max speeds, but lower torque. Mid-drives, which use the gearing of your bike, also have max speeds, which depend on whichever gear your bike is in.
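As a rough illustration of how wheel diameter turns a motor’s RPM into a road speed: the 300 rpm figure below is a made-up example rather than the spec of any particular motor, and nominal wheel sizes are only approximately the real rolling diameter.

```python
import math

INCHES_PER_MILE = 63_360

def wheel_speed_mph(wheel_rpm: float, wheel_diameter_in: float) -> float:
    """Ground speed for a wheel of the given diameter turning at wheel_rpm."""
    circumference_in = math.pi * wheel_diameter_in
    inches_per_hour = wheel_rpm * circumference_in * 60
    return inches_per_hour / INCHES_PER_MILE

# Hypothetical hub motor that tops out at 300 rpm at the wheel.
for diameter in (20, 26, 29):
    print(f'{diameter}" wheel: {wheel_speed_mph(300, diameter):.1f} mph')
```

The same motor goes faster in a bigger wheel, but the push at the ground is the motor’s torque divided by the wheel radius, so the bigger wheel also gets less force for climbing and accelerating.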

Direct Drive

Direct drive motors are as simple as you can get, and they work very well. They are motors that directly drive the wheel, with no clutch used. Generally, direct drive motors have no internal gear reduction. Meaning that the RPM of the wheel is the RPM of the motor. There are motors with internal gearing without clutches, such as Grin Tech’s GMAC motor, which are usually lumped in with direct drive motors when talking about certain topics like regenerative braking. You will almost never find a direct drive motor that has a clutch.

Direct drives don’t have a clutch. The motor is always engaged. This is what enables regenerative braking, but it also means that you can’t just turn off the motor and pedal - the motor will actively sap your power even if the entire system is off. You can configure certain systems to electronically freewheel, which does use extra power (on the order of a few watts), but this still results in lower system losses than if you simply allowed the motor to drain your power.

Direct drives offer regenerative braking, which allows you to slow down by using the motor as a generator, converting your kinetic energy back into electricity (and ultimately chemical energy as it recharges your battery). Depending on the kind of environment you’ll be riding in, regenerative braking can typically recover (or extend your range by) 3% to 15%. Hillier terrain will allow you to extend your range the most. More urban environments, where you’ll be stopping more often, also allow you to recover more energy. However, more important than extending your range is that regenerative braking will vastly reduce wear on your physical brakes. Note that your motor controller needs to support regen in order for regenerative braking to work. Otherwise there’s really no point installing a direct drive motor.

Direct drives can also be operated at a much higher power level than geared hub or mid-drive motors. Without having to worry about damaging any internal gearing, or damaging the bicycle’s drivetrain, it’s not rare to see direct drive motors that can output 2 to 4 kW of power. (This does not apply to geared hub motors that lack a clutch.)

Direct drive motors are near-silent. There’s no gearing to cause additional noise. The only noises are road noise, wind noise, and whining of the electronics.

The main downside to direct drive motors is that they are inefficient for low speed/high torque uses. The 1-1 ratio of the motor to the wheel means that for bicycle wheels, direct drive motors don’t really run at efficient RPMs until you’re going greater than 15 mph or so. This is exacerbated on hills, or when pulling cargo, where you’re naturally going to put more load on the motor at lower speeds. Running a direct drive motor under such high-load conditions can lead to overheating. This isn’t as much of an issue for motors with geared hubs that lack clutches, which can handle higher torque loads.

To my knowledge, it’s very hard to find a factory ebike that ships with a direct drive motor. These are generally used in DIY installs, or from high-end boutique manufacturers.

Geared Hub

A geared hub motor is a motor with internal planetary gears that allow the motor to spin at a much faster rate than a traditional direct drive motor. Additionally, nearly all geared hub motors have clutches that allow them to freewheel, which means you can turn off the electrics and pedal the bike like a very heavy acoustic (non-electric) bike.

Geared hubs are most of the hub motors on the market. Because of the gearing, they provide much more torque than direct drives, and can thus be much smaller than direct drives. They are generally a bit louder than direct drives, but newer ones are coming out that use quieter gear reduction which somewhat mitigates that issue. Generally, if you come across a hub motor kit on the market, it’s using a geared hub motor.

Geared hub motors are usually, but not always, slightly lower power than direct drives. Higher power geared hub motors can have heat dissipation issues. Plus at higher power levels, you’ll want to run a direct drive motor for the extra speed.

Because geared hub motors are so common, they’re typically used in most entry-level ebike conversions. Additionally, a lot of entry-level factory ebikes use geared hub motors.

Mid Drive Motors

In general, mid-drive motors work by driving the rear wheel via the bike’s drivetrain. Modern ebikes do this by essentially mounting the motor in or around the bottom bracket. This places the weight of the motor in a really good position, balance-wise. It also reduces the total unsprung weight of the bike, which improves traction. Because mid-drives can take advantage of the bike’s gearing, you can get both high torque and a decently fast top speed, meaning they can climb well and perform well on flat ground.

The downsides of mid-drives are that they increase wear on the bike’s drivetrain. They also can’t be run at super high power levels. Above 2000 watts, there’s a real risk of snapping your bicycle chain. Even at more regular power levels like 500 watts, they still increase wear simply because the average human will put out something like 100 watts continuously. You can’t really do regen on a mid-drive, so you’ll be able to climb hills faster, but you won’t regain any of that energy on the way down. They also tend to be louder because most mid-drives have some kind of internal gearing in addition to using the bike’s gearing. Finally, if your bike uses external gearing (aka a derailleur, most bikes use these) instead of internal gearing, then you need to not run power through the drive while shifting. This is done either by using a specific “gearsensor” that detects a gear change, which the controller uses to briefly cut power to the motor during the shift, or by making a habit of cutting power yourself when you change gears.

Still, for most people a mid-drive motor is a really good entry to ebiking. Especially if you’re going to get a factory-built ebike. Most factory-built ebikes are specced for the European market, which has a limit of 250 watts on the motor, and a mid-drive really helps when the motor will only output 250 watts of power.

Which motor style is better?

It depends.

First, let’s examine this as hub motor vs. mid-drive motor. Mid-drives excel at climbing hills and starting from a stop, making them a no-brainer for something like mountain biking. They also work really well for other high-torque applications, like riding through sand or snow.

My first few ebikes used mid-drive motors, and the only thing I really want from hub motors is the ability to use regenerative braking.

Hub motors excel at riding on flat terrain, or climbing relatively gentle inclines. Because of the potential for regenerative braking, hub motors are also better for downhill and urban environments. Additionally, because they’re separate from the bike’s drivetrain, you get a bit of redundancy if either fails. With mid-drives, you can obviously still pedal if the electrics fail. But if the drivetrain fails, at best you’re left with a really awkward scooter. Thankfully, this isn’t an issue in practice so long as you take care of the drivetrain (regularly clean and lubricate the chain, replacing it as needed, etc.) and don’t run too much power through it.

Again, it depends on your use case. My first few ebikes used mid-drive motors, which allowed me to convert existing bikes without much fuss. My only complaints are with the specific kits I used; otherwise I’m quite happy with them.

Solar?

Powering an ebike using solar power is actually doable and sometimes worthwhile. It’s not cost-efficient in terms of being the sole source of electricity. Ebikes are efficient enough, and energy is cheap enough that you will spend maybe $1.00 in electricity for the entire life of the bicycle. However, for long-range applications, it is cheaper to buy and set up solar panels + a charge controller than it is to buy a second (or larger) battery.

The way you use solar with an ebike is to use the panels to charge the ebike battery. It doesn’t make sense to ditch the battery and use the panels to directly run the motor. The power output from the panels is too low and variable to be worth doing.

The most obvious reason to use solar power is for long, off-grid bicycle tours. Solar also works well for other types of off-grid charging. For example, you might ride an ebike to work, and leave it outside to fully charge back up while you work. Which, again, won’t save you anywhere near enough money to be worth tracking, but if you were going to leave the bike outside anyway, then it might as well charge while it’s there. This also saves you time from needing to keep a charger at work, or from having to bring your battery to your desk to charge there.

One idea I’m planning to explore with solar is the idea of putting on a solar roof, which would provide partial shade for me on rides, while also slightly charging the battery. Here the primary reason to do this is to provide shade, with a side-benefit of slightly extending the range of the ebike. I’ll update this once I do so.

My Experience

I currently have 2 ebikes, each of which is powered by a BBS02 mid-drive motor. One of which is on a Yuba Mundo longtail cargo bike, and the other is on a standard hybrid bike. I think that the BBS02 and BBSHD mid-drive motors are excellent kits for creating a really good ebike with minimal fuss. My main issue with these kits is that Bafang (the manufacturer) decided to only use cadence-based pedal-assist for these, not torque-sensing. Additionally, I’d love to use regenerative braking, so I’m actively looking at changing one of my bikes to use a hub-motor system so I can try out regenerative braking.

Links

Legality

I’m a person on the internet. This is not legal advice. This might even be entirely wrong or outdated. Or you might live outside of the US (or in one of the states that bans ebikes). Don’t be an ass, use your brain.

In the US, ebikes are limited to 750 watts when operated in public spaces. Locally, there may be additional limitations or even bans. In private spaces, there’s basically no limits.

A bunch of states are adopting different classes of ebikes, based off what California introduced. These are:

- Class 1: Ebikes limited to 20 mph, pedal-assist only.

- Class 2: Ebikes limited to 20 mph, throttle allowed.

- Class 3: Ebikes limited to 28 mph, pedal-assist only. Can’t ride on “Class 1 bicycle paths”. Must be at least 16 to ride.

You can still pedal an ebike past the given limit, but after 28 mph, a class 3 ebike should cut off the motor.
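As a rough sketch, the class rules above fit in a small lookup table. The names here (`EbikeClass`, `EBIKE_CLASSES`, `motor_may_assist`) are made up for illustration, and the values are just the ones from the list above; local rules may differ, and this is still not legal advice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EbikeClass:
    assist_limit_mph: int   # speed at which the motor should stop helping
    throttle_allowed: bool  # False means pedal-assist only


# Values copied from the class list above (assumed, not checked against any statute).
EBIKE_CLASSES = {
    1: EbikeClass(assist_limit_mph=20, throttle_allowed=False),
    2: EbikeClass(assist_limit_mph=20, throttle_allowed=True),
    3: EbikeClass(assist_limit_mph=28, throttle_allowed=False),
}


def motor_may_assist(ebike_class: int, speed_mph: float) -> bool:
    """True while the motor is still allowed to add power at this speed."""
    return speed_mph < EBIKE_CLASSES[ebike_class].assist_limit_mph


print(motor_may_assist(3, 25))  # under the class 3 limit
print(motor_may_assist(1, 25))  # past the class 1 limit
```

You can always pedal faster than the limit; the check only covers whether the motor should still be helping.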

In practice, ebikes are basically ignored by cops. They likely aren’t educated on these laws, and even if they are, it’s really hard to prove that you were actually doing anything wrong. The takeaway here is to try not to do anything to bring extra attention to you. Which, unfortunately, might not be possible.

Cool, that’s done.

Last updated: 2022-08-14 18:29:20 -0700

Books

I should leave reviews on goodreads, but I don’t.

Some definition on genre:

I vastly prefer to read sci-fi and/or fantasy. Of that, I really enjoy hard sci-fi, but that’s not a requirement.

Here’s a list of books and other readings I enjoy:

Books

Sorted by Author

Andy Weir

- The Martian is a hard sci-fi book about someone left behind on one of the first missions to Mars, and his struggles to get back home.

- Artemis is a heist novel set in the first city on the moon. Like The Martian, it’s also hard sci-fi.

Fletcher DeLancey

My partner turned me on to her. Her Chronicles of Alsea series is pretty great, though at times it reads like the fan fiction it grew out of. They’re still highly entertaining and worth reading.

Scott Meyer

I really enjoy his Magic 2.0 series, though it does have a significant drop-off in quality. The first two books are amazing, the third is pretty good, but not as good as the previous two. But the reviews for the fourth one have kept me from continuing.

Tamora Pierce

When I was 11 or 12, her Circle of Magic books caught my eye at a Barnes and Noble. My parents bought the entire quartet for me. Somewhat recently, I began to re-read these, and remembered everything I enjoyed about them, plus additional things that my older perspective was able to pick up on. This time around, I also read her Circle Opens quartet, which is also good. Highly recommend these feminist books for any fans of fantasy.

Tolkien

I remember reading The Hobbit the summer before fifth grade. The only scene from it that I remember, and even then barely, is Bilbo getting the ring. I really ought to re-read it sometime.

Also around that time, I wanted to read Lord of the Rings. But I just couldn’t get into it. It wasn’t until after I turned 30 that I actually got around to reading the books.

Other Readings

- HFY is a subreddit where people share sci-fi/fantasy stories where humans are the badasses. Usually by picking one particular trait of humans and exaggerating it to give them an advantage over other species.

Last updated: 2022-10-29 09:20:58 -0700

FanFiction

I said I’d link all sorts of crap.

Organized by fandom

Legend of Korra

Almost always Korrasami stuff, because of course.

Smut

Of course I’m going there. NSFW.

She-Ra and the Princesses of Power

Catradora!

Smut

NSFW.

Greek Mythology

Found some Medusa F/F.

From this reddit thread

- Short story in the thread itself

- Love is Blind: Medusa rescues a blind peasant girl

- Living Art: a blind artist is sacrificed to Medusa as a living statue

- In Another Age: Medusa runs into a blind girl and falls in love

Last updated: 2020-05-16 23:19:11 -0700

HFY

Short for Humanity, Fuck Yeah. These are sci-fi/fantasy stories about how humans are different than other species.

Some of my favorite examples are:

SciFi

- The Deathworlders is one of the original/foundational HFY stories. Humans are still pre-contact, yet some have been illegally kidnapped and taken into space, and as it turns out, they’re the only ones to have evolved on a “deathworld” - a high-gravity planet with very competitive life.

- Contact Procedures. Humans are relatively advanced when an FTL gate is installed in their system. They are introduced to a corrupt government, figure out that said government is corrupt, give it the finger, and fight it. It’s one of the best-written stories in the subreddit.

- Intergalactic Challenge Games. Aliens host an Olympics-inspired event, this is the first event with humans, and humans troll the shit out of them. 5 part story.

- On the Shoulders of Giants is a look at human rocket development (as of mid-2018 or so, pre BFR -> Starship/Super Heavy rename) from the perspective of them being museum pieces.

- The Cult of Janus - Humans set up a religion to seed spies and soldiers expecting some galactic war. To keep its cover, the religion takes care of the community. Centuries later, another religion tries to start a holy war, and the Church of Janus responds.

- Humanity’s Debt. Doctors, fuck yeah.

Fantasy/Sci-Fi mix

- The Magineer is about a futuristic engineer w/ AI implants who gets dumped in an MMORPG setting where magic is, at its most basic level, a programming language. Super interesting.

- Finishing The Fight is a Halo/Neverwinter Nights fanfic. It’s written pre-Halo 4, which honestly is a plus in my book.

Last updated: 2021-04-12 12:26:52 -0700

Flying

Notes on Flying and Aviation.

Everything from writeups of notable flights, notes on airports and some of the noise abatement procedures around them, to a bunch of theory and how to actually operate an airplane.

Basically, more information gets added to this subsection as I study for an additional rating or license. Currently, I’m an instrument-rated private pilot. I have no plans to go for a commercial license. I might do a multi-engine rating at some point. I would also like a seaplane rating as well.

Links

Last updated: 2022-11-06 10:49:35 -0800

Aviation Decision Making

ADM, or Aviation Decision Making (the PHAK’s own name for it is Aeronautical Decision-Making), covers all of the decisions made surrounding flying - from whether or not to even go flying in the first place, to discontinuing a flight or completing it as planned.

This is taken from the PHAK, either verbatim, or adapted.

Steps for good decision-making:

- Identifying personal attitudes hazardous to safe flight

- Learning behavior modification techniques

- Learning how to recognize and cope with stress

- Developing risk assessment skills

- Using all resources

- Evaluating the effectiveness of one’s ADM skills.

Risk Management

1. Accept no unnecessary risk.

   Duh, flying has risk, but maybe don’t fly VFR in low visibility conditions? Or at least, do so with a CFI who has experience in those conditions, from whom you can learn.

2. Make risk decisions at the appropriate level.

   PIC owns all the risk. Don’t let passengers bully you into violating 1, and don’t let ATC do so either. It’s always appropriate to tell ATC “unable” to a command.

3. Accept risk when benefits outweigh costs.

   Don’t stack risks. Don’t fly an unfamiliar plane in MVFR conditions.

4. Integrate risk management into planning at all levels.

   Not just in preflight planning, but at all stages of the flight. Maybe the weather goes to shit en-route. In which case, reconsider whether the increased risk is worth it, or maybe you can go somewhere else - or even just return back to where you came from.

Hazard and Risk

Hazard is a condition, event or circumstance (whether real or perceived) that a pilot encounters. Risk is the pilot’s assessment of the hazard. Note that different pilots can come up with different risks for the same hazard.

Hazardous Attitudes

Studies have identified 5 hazardous attitudes that can prevent making sound decisions:

| Attitude | Phrase | Antidote | Notes |

|---|---|---|---|

| Anti-authority | Don’t tell me | Follow the rules, they’re usually right | Aviation regulations are often written in blood, there’s a very good reason to follow them. |

| Impulsivity | Do it quickly | Not so fast. Think first. | Like with everything in modern life, thinking before you act is always the correct thing to do. Actually doing that, though, is much harder. |

| Invulnerability | It won’t happen to me | It could happen to me | Power loss on takeoff is a thing that only happens to other people, right? Wrong. It could totally happen, so be prepared in case it does. |

| Macho | I can do it | Taking chances is foolish | Don’t take unnecessary risks. Don’t do things to prove to yourself/others that you can. You’re already a cool person by being able to fly, you don’t have to prove anything. |

| Resignation | What’s the use? | I’m not helpless. I can make a difference | This is, to me, probably the most deadly of the 5 attitudes. Getting into an emergency situation via the other 4 is bad, but then deciding that there’s nothing you can do - especially when there often is something you can do - is what will kill you. Less dramatically, letting someone bully you into going along with unreasonable requests can also kill you. You are PIC, you are in charge. Act like it. |

Risk Assessment Matrix

Also copied, more or less, is a matrix on deciding how bad a particular risk is. With likelihood on one axis, and severity on the other.

Likelihood is expected chance that event will occur:

- Probable: Will occur several times

- Occasional: Will probably occur sometime (expected at least once)

- Remote: Unexpected to occur, but possible

- Improbable: Very unlikely to occur.

Severity is expected consequences of the event happening:

- Catastrophic: Loss of life or property

- Critical: Severe injury/major damage (expensive to repair, insurance might declare plane totaled)

- Marginal: Minor injury/minor damage (only a few AMUs of damage)

- Negligible: Less than minor injury/damage

| Likelihood ↓ / Severity → | Catastrophic | Critical | Marginal | Negligible |

|---|---|---|---|---|

| Probable | High | High | Serious | Medium |

| Occasional | High | Serious | Medium | Low |

| Remote | Serious | Medium | Medium | Low |

| Improbable | Medium | Medium | Medium | Low |
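As a rough sketch (this part is not from the PHAK), the matrix above is just a two-key lookup. The names `LIKELIHOODS`, `SEVERITIES`, `RISK_MATRIX` and `assess_risk` are made up for illustration; the cell values are copied from the table.

```python
LIKELIHOODS = ("probable", "occasional", "remote", "improbable")
SEVERITIES = ("catastrophic", "critical", "marginal", "negligible")

# Rows follow LIKELIHOODS, columns follow SEVERITIES, copied from the table above.
RISK_MATRIX = (
    ("high", "high", "serious", "medium"),
    ("high", "serious", "medium", "low"),
    ("serious", "medium", "medium", "low"),
    ("medium", "medium", "medium", "low"),
)


def assess_risk(likelihood: str, severity: str) -> str:
    """Look up the risk level for a likelihood/severity pair (case-insensitive)."""
    row = LIKELIHOODS.index(likelihood.lower())
    col = SEVERITIES.index(severity.lower())
    return RISK_MATRIX[row][col]


print(assess_risk("Remote", "Catastrophic"))  # serious
```

An unknown likelihood or severity raises a `ValueError`, which seems like the right failure mode for something you would use to make a go/no-go call.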

Mitigating Risk

To mitigate risk, do one of the following:

- Cancel the flight

- Delay the flight

- Bring someone more experienced who can help you address the risk

Roughly in order of likelihood.

One suggested way to eliminate the “must go home” pressure is to always bring an overnight kit with you, so that if you do get stuck somewhere you’re at least fine for the night.

Remember, the general rule for choosing to fly GA to a place is:

Time to spare, go by air.

Last updated: 2019-09-11 14:16:51 -0700

AeroMedical

Medical Concerns with flying.

Hypoxia

Hypoxia is an insufficient supply of oxygen to the cells. Hypoxia is incredibly dangerous as it causes the brain and other vital organs to become impaired. Additionally, the symptoms of hypoxia vary based on the person, and can be hard to detect. I personally have felt ill and lightheaded as a result of hypoxia, but the next time I experience hypoxia might have different symptoms.

There are 4 types of hypoxia noted in the PHAK:

- Hypoxic hypoxia: Too little oxygen as a whole for the body. It’s a concern as you increase in altitude, there’s less air overall, which can result in too little oxygen.

- Hypemic Hypoxia: There’s sufficient oxygen in the air, but not enough in the blood. Most commonly caused by CO poisoning. Can also be caused by recent (within the past several weeks) blood donation and exacerbated by flying at altitude.

- Stagnant Hypoxia: The blood can take up the oxygen, but it is unable to be transported to the organs. Can be caused by heart problems, excessive acceleration, a constricted blood vessel, or cold temperatures.

- Histotoxic Hypoxia: The oxygen is able to be transported via the blood to the organs, but the organs are unable to use it. Usually caused by alcohol or drugs. “drinking one ounce of alcohol can equate to an additional 2,000 feet of physiological altitude” - PHAK.

Hyperventilation

Excessive rate and depth of respiration. Causes an excessive amount of CO2 to be removed from the body. Similar symptoms to hypoxia. Breathing into a paper bag or talking aloud helps recovery.

Last updated: 2021-05-29 09:20:33 -0700

ATC

Air Traffic Control. The system of people and equipment designed to help keep you safe in the air.

Per FAR 91.125, light gun signals that ATC can send you in the event of lost comms are:

| Color/Type | On Ground | In Flight |

|---|---|---|

| Green, Steady | Cleared for takeoff | Cleared to land |

| Green, Flashing | Cleared to taxi | Return for landing |

| Red, Steady | Stop | Give way to other aircraft and continue circling |

| Red, Flashing | Taxi clear of runway in use | Airport unsafe - do not land |

| White, Flashing | Return to starting point on airport | N/A |

| Red & Green, alternating | Exercise Extreme Caution | Exercise Extreme Caution |

Last updated: 2021-11-11 17:50:50 -0800

Big Bear City Airport, L35

Information for flying in to LA’s local mountainous airport.

Noise Abatement

(Not required, but please follow these)

Available on whispertrack.

Curfew: 10 pm to 7 am local time.

Recommends minimizing “unnecessary engine idling and runups”, especially at night.

Procedures

- Preferred Runway: 26

- Pattern Altitude: 8000

- Intersection Takeoffs not recommended

Last updated: 2019-11-04 16:42:24 -0800

Buying a plane

There’s all sorts of guides from people on how to do this. Here’s my process.

Deciding on a kind of plane to buy

- Figure out your “mission” (what will encompass the 90% of flights you plan to do?)

- For me, most of my planned flights are cross country flights. In particular, I have a desire to make a 410 nm trip as much as I can (as opposed to flying commercially, which will still be a portion of the trips). I’d prefer this trip to take less than 3 hours each time I do it. Putting that in numbers, I want something with at least 500 nm range (not including reserves) that can fly at least 140 kts true.

- For multiple reasons, I want something equipped for IFR.

- Nice to haves:

- Control stick. I just prefer it to a yoke.

- Glass panel.

- Low wing. They’re just cooler.

- Faster is better, of course.

- Unnecessary:

- I don’t need to carry passengers. There’s no need for me to own something that has 4 seats. I’ll be perfectly fine with a 2 seater. I could also be fine with a single seater, but that makes getting a checkout much more annoying.

- Off airport operations, while dope, are not for me.

So, with that in mind, I essentially am skipping all the trainers, because they simply don’t have all the range (nor are they fast enough to make this flight in less than 3 and a half hours).
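The speed requirement falls out of simple arithmetic. A minimal sketch, assuming no wind and ignoring climb and descent (the function name is made up):

```python
def required_ground_speed_kts(distance_nm: float, max_hours: float) -> float:
    """Average ground speed needed to cover a distance within a time budget."""
    return distance_nm / max_hours


# The 410 nm trip in under 3 hours, with no wind:
print(round(required_ground_speed_kts(410, 3), 1))  # 136.7
```

That is where the "at least 140 kts true" figure comes from, with a little margin for headwinds.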

With all this, I’m probably going to either get something experimental, a Grumman Tiger, or a Mooney.

Deciding on which particular plane to buy

My questions, on how to go from staring at listings on barnstormers to owning a plane.

- How do I decide whether to reach out to the seller?

- How heavily should I favor more local planes to less local?

- Very.

- How do I find an A&P to do the prebuy?

- Like, how do I even find one?

- How do I make sure they’re trustworthy/do a good job?

- How much will a prebuy run me? I expect around 3-5k, probably.

- From what I’ve read, a prebuy essentially turns in to an annual.

- How do I find a CFI to give me a checkout in the plane?

- If I don’t have the cash up front, how do I get a loan for it?

- Yeah, in this economy, I’d much prefer to have as few outstanding loans as possible.

- Anything else I should know on the buying front?

- Once I actually buy it, how do I find a place to store it?

- Is getting a hangar worth it in SMO? (Probably not, tie down is fine, though)

- How do I get insurance/who should I get insurance from?

- AOPA’s recommendation.

So much I don’t know.

Last updated: 2020-04-21 10:59:32 -0700

Checklists

Checklists for airplanes I fly.

Last updated: 2019-06-22 22:38:48 -0700

Emergency

- [] Airspeed - best glide

- [] Best Field - keep looking for a better place to land.

- [] Checklist

- [] Declare

- [] Squawk 7700

- [] Mayday (121.5 or current freq)

- [] Engine - Shutdown

- [] Flaps - As required

- [] Get Ready (for crash)

- [] Seatbelts - Tighten

- [] Sunglasses, headset - Remove

- [] Passenger - Secure

- [] Master switch - Off

Last updated: 2019-07-06 11:39:54 -0700

Preflight checklists

Mnemonics and other things to consider, often before you even get to the airport

IMSAFE

Mnemonic to go over before you even go to the airport. Covers how you are doing.

- Illness: Are you sick, or do you feel like you’re becoming sick?

- Medication: Taking anything not allowed, or otherwise out of the ordinary?

- Stress: Are you stressed/worried about other things? Want to be in a good headspace.

- Alcohol: >8 hours bottle to throttle in the US.

- Fatigue: Had enough sleep? Had enough to eat?

- Emotion: How are you feeling? Are you mentally well enough to fly?

For me, “am I able to make the bike ride to SMO?” is usually enough to answer all of these.

PAVE

Helps you perceive hazards and assess risks.

- P: Pilot

  Am I ready for this flight? In terms of experience, recency, currency, and physical and emotional condition. See IMSAFE for answers to the latter 2.

- A: Aircraft

  - Is this the right aircraft for the flight?

  - Am I familiar with and current in the aircraft?

  - Is this aircraft properly equipped for the flight? (Instruments, lights, navigation and communication equipment)

  - Can this aircraft use the runways available for the trip w/ margin for safety & weather?

  - Can this aircraft carry the planned load?

  - Can it operate at the intended altitudes?

  - Does it have sufficient fuel capacity for each leg?

  - Does it actually have the necessary fuel in it?

- V: Environment

  - Weather

  - Terrain

  - Airports

  - Airspace

  - Nighttime

- E: External Pressures

  Other things influencing the flight.

  - Someone waiting at the airport for the arrival of the flight

  - Passenger the pilot doesn’t want to disappoint (see: hazardous attitudes)

  - Desire to impress someone (see: hazardous attitudes)

  - Desire to demonstrate pilot qualifications (see: hazardous attitudes)

  - Desire to satisfy a personal goal (“get-there-itis”)

  - The pilot’s general goal-completion orientation

  - Emotional pressure/pride that you might not be as good as you thought you were.

Last updated: 2019-09-11 09:21:09 -0700

Composite Homebuilt Aircraft

Some of the more interesting and efficient designs in the general aviation space.

- Marc Zeitlin is one of the most knowledgeable persons on composite aircraft.

- Rutan Factory Aircraft Designs on CD

- Cyanoacry’s imgur thread on getting his damaged verieze repaired

Last updated: 2023-09-27 19:21:50 -0700

Using an E6B Mechanical Flight Computer

Literally something you only need to do during primary training, to prove you CAN use an e6b. Sigh.

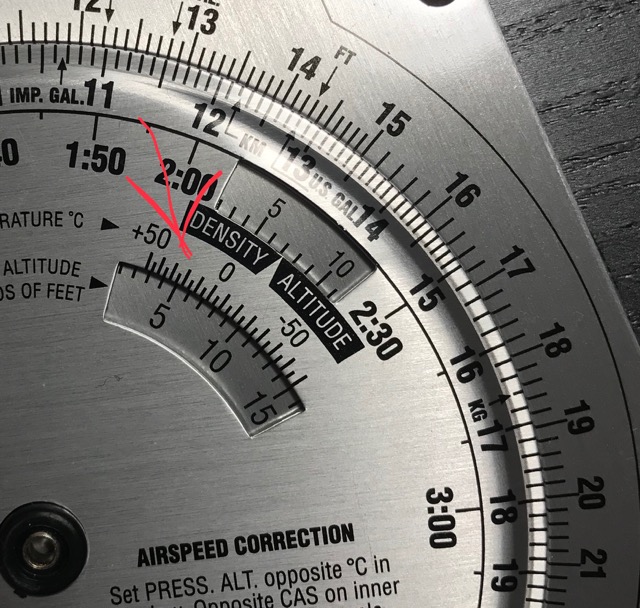

Density Altitude

For engine performance!

As you know, density altitude is pressure altitude adjusted for non-standard temperature. Standard temperature is 59° F, or 15° C at sea level.

Pressure altitude is true altitude adjusted for non-standard pressure. Standard pressure is 29.92 inches of mercury.

Pressure altitude is a really simple formula: pressure_altitude = (standard_pressure - pressure_setting) * 1000 + true_altitude

And while we can easily correct for non-standard temperature (the formula is: density_altitude = pressure_altitude + (120 * (outside_air_temperature - isa_standard_temperature))), it’s not as simple to do it manually, and an e6b is easy enough to use here.

Estimating isa standard temperature is ((true_altitude / 500) - 15) * -1

In the image below, the pressure altitude is 4500 feet, the true altitude is 4500 feet, and the outside air temperature is estimated to be 28° C. For the sake of the calculation, we bump that to 30° C. As you can see, the e6b then tells us that the density altitude is just over 7000 feet. Which we can double-check with a bit of arithmetic (using the original 28° C):

density_altitude = 4500 + (120 * (28 - (((4500 / 500) - 15) * -1)))

= 4500 + (120 * (28 - ((9 - 15) * -1)))

= 4500 + (120 * (28 - (-6 * -1)))

= 4500 + (120 * (28 - 6))

= 4500 + (120 * 22)

= 4500 + 2640

= 7140

Which is about what the e6b tells us it is.
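The same rule-of-thumb formulas can be sketched in a few lines of Python. The function names are made up for illustration, and these are the approximations from this page, not a full standard-atmosphere model.

```python
STANDARD_PRESSURE_INHG = 29.92


def pressure_altitude_ft(true_altitude_ft: float, altimeter_setting_inhg: float) -> float:
    """Pressure altitude, using 1000 feet per inch of mercury."""
    return (STANDARD_PRESSURE_INHG - altimeter_setting_inhg) * 1000 + true_altitude_ft


def isa_temperature_c(altitude_ft: float) -> float:
    """Estimated standard temperature: 15 C at sea level, dropping 2 C per 1000 feet."""
    return 15 - altitude_ft / 500


def density_altitude_ft(pressure_alt_ft: float, oat_c: float) -> float:
    """Density altitude, adding 120 feet per degree C above standard temperature."""
    return pressure_alt_ft + 120 * (oat_c - isa_temperature_c(pressure_alt_ft))


# The example from above: 4500 feet pressure altitude, 28 C outside.
print(density_altitude_ft(4500, 28))  # 7140.0
```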

True Airspeed

True airspeed is effectively calibrated airspeed corrected for the density altitude.

So, in this case let’s re-use the earlier density altitude of ~7100 feet.

Calibrated airspeed then corresponds to the inner ring, and true airspeed the outer ring. That is, if your calibrated airspeed is 150 kts, then the true airspeed is ~167 kts.

Remember to correct for decimal placement.
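To sanity-check the wheel, here is a minimal sketch that divides calibrated airspeed by the square root of the standard-atmosphere density ratio. It assumes the usual troposphere constants and ignores compressibility (fine at light-airplane speeds); the function names are made up.

```python
import math


def isa_density_ratio(density_altitude_ft: float) -> float:
    """Air density relative to sea level in the standard atmosphere (troposphere only)."""
    return (1 - 6.8756e-6 * density_altitude_ft) ** 4.2559


def true_airspeed_kts(calibrated_kts: float, density_altitude_ft: float) -> float:
    """True airspeed from calibrated airspeed, ignoring compressibility."""
    return calibrated_kts / math.sqrt(isa_density_ratio(density_altitude_ft))


# 150 kts calibrated at ~7100 feet density altitude
print(round(true_airspeed_kts(150, 7100)))  # 167
```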

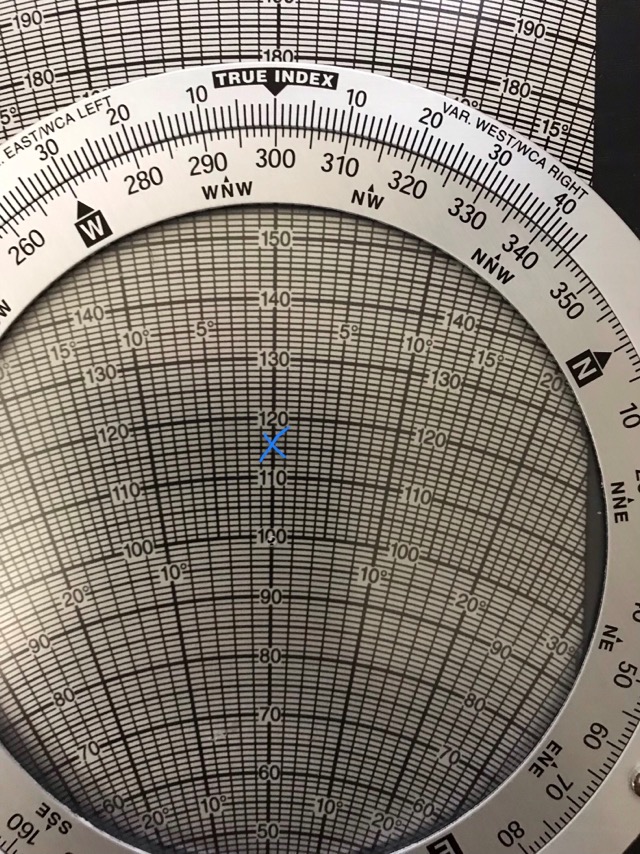

Ground Speed

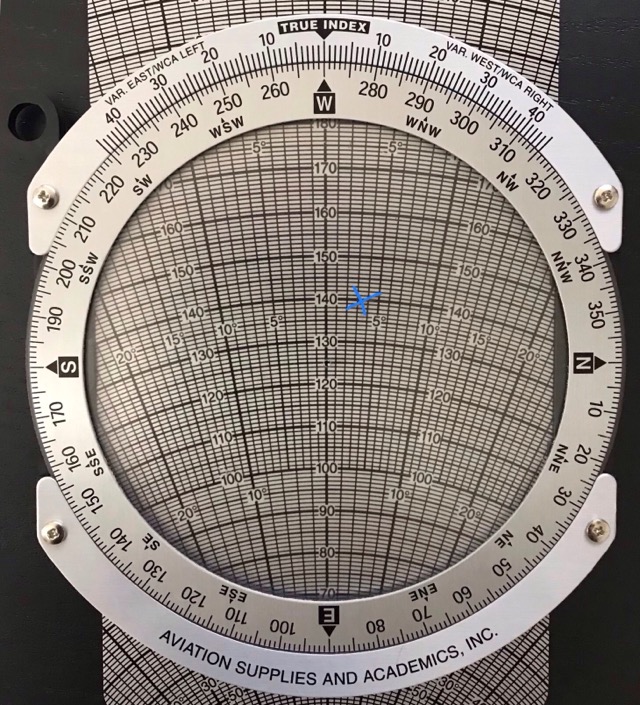

For a given true airspeed and heading, you can easily calculate your ground speed.

For this example, let’s say you are heading 270 at 125 knots, with the wind at 300 at 15 knots.

Set the center “dot” on the rear of the circle to some value - I pick 100 because it’s a nice round number. Set the circle (true index) to the wind direction (so… 300). Now, mark (IN PENCIL) the wind speed above the center dot, relative to the value you chose (place a mark where it says 115). Like so:

Now rotate so that the course (270) is under the true index, and slide so that the pencil mark sits on the true airspeed arc (125). Like so:

Now we can read the ground speed under the center dot (in this case, ~112 knots, which makes sense: a wind from 300 is mostly a headwind when heading 270), and the wind correction angle at the pencil mark (~3 degrees, to the right - or fly heading 273).

When done, wipe the pencil lead off.
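The wind side of the e6b is solving a triangle, which can also be done with a little trig. A minimal sketch, assuming the wind direction is where the wind is coming from and that the crosswind is smaller than the true airspeed (the function name is made up):

```python
import math


def wind_triangle(course_deg: float, tas_kts: float,
                  wind_from_deg: float, wind_kts: float) -> tuple[float, float]:
    """Return (ground_speed_kts, wind_correction_deg); positive correction means steer right."""
    angle = math.radians(wind_from_deg - course_deg)
    crosswind = wind_kts * math.sin(angle)  # positive: wind from the right
    headwind = wind_kts * math.cos(angle)   # positive: wind on the nose
    correction = math.asin(crosswind / tas_kts)
    ground_speed = tas_kts * math.cos(correction) - headwind
    return ground_speed, math.degrees(correction)


ground_speed, correction = wind_triangle(270, 125, 300, 15)
print(round(ground_speed), round(correction))
```

A useful gut check: wind on the nose should give a ground speed below the true airspeed, and wind on the tail should give one above it.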

Time to travel distance

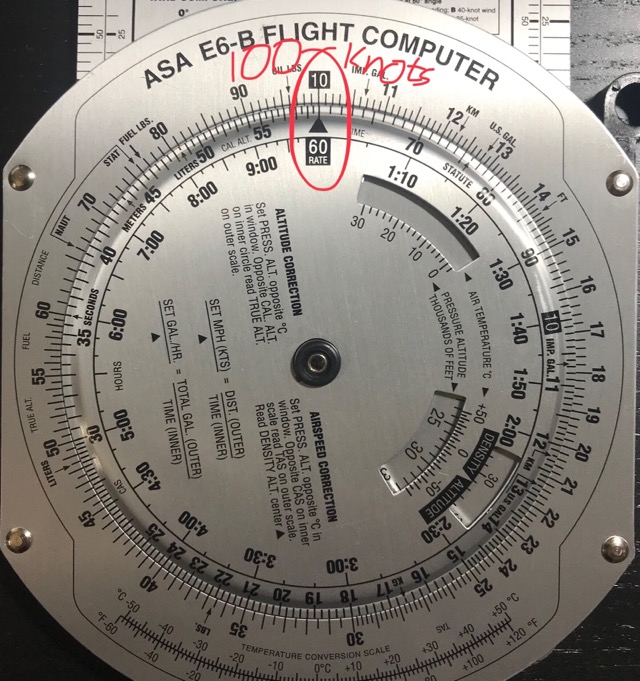

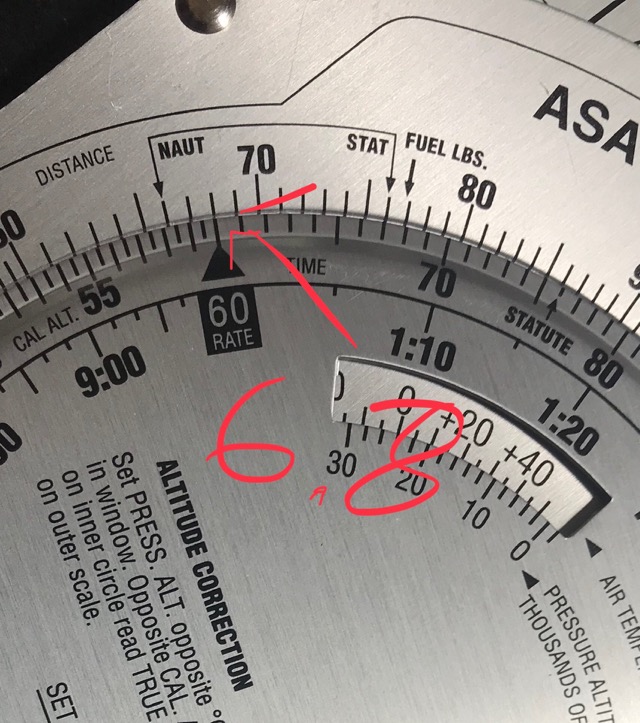

Now that we have a ground speed for this leg, we can calculate the time it takes to travel a given distance.

For this example, let’s say we have a ground speed of 100 kts, and the leg is going to be 13 nm.

Before we use the e6b, let’s do some quick mental math to estimate an acceptable range: 13 nm / 100 kts is a bit over 1/10 hour. Converting to minutes, that’s a bit over 6 minutes - this leg should take between 7 and 9 minutes.

First thing we do to calculate this on an e6b is to align the “60 RATE” box on the inner circle with the approximate speed you’re going. In this case, it should be aligned with the “10” at the top.

Next, we count the distance from the “10” marker - 13 ticks from the marker, and compare that with the corresponding value on the “time” scale. This gives us our estimated time. In this case, it’s just under 8 minutes. As expected.

Of course, since we have the time (and a calculator handy) to do this, the actual estimated time is:

distance = speed * time

time = distance / speed

time = 13 nm / 100 kts

time = 0.13 hrs

time = 7.8 minutes

As expected.
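The same calculation as a tiny Python sketch (the function name is made up):

```python
def leg_time_minutes(distance_nm: float, ground_speed_kts: float) -> float:
    """Time to fly a leg, in minutes."""
    return distance_nm / ground_speed_kts * 60


print(round(leg_time_minutes(13, 100), 1))  # 7.8
```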

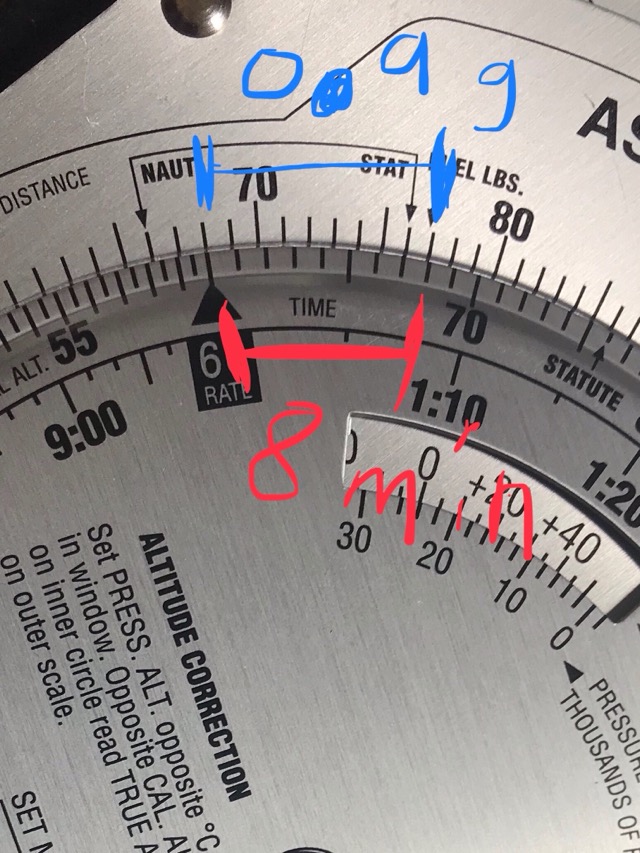

Fuel usage

Now that we have time to travel a given distance, we can use the known rate of fuel consumption to calculate fuel usage.

For this, let’s assume a fuel usage rate of 6.8 gph at cruise - a somewhat efficient Cessna. I’ll also use the time from the previous calculation.

We’ll mentally move the decimal point to the right one, to place the “rate” indicator at 68 on the calculator.

Now, on that same minutes time scale we used earlier, we count 8 minutes - or the amount of time we plan to travel that leg.

The gph usage then corresponds to the other side of that 8 minute marker - in this case, 9 ticks above the 68 tick. Keep in mind to move the decimal point back to the left, so that we use 0.9 gallons as our expected fuel usage.

Note that we could go the other way - if we had 10 gallons available, then we could move 100 ticks clockwise to get the number of minutes we can travel at that rate. (In this case, just under 1.5 hours). You can also compare to the inner “time scale” to get the value in hours instead of minutes.
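Both directions of the fuel calculation are one-liners. A minimal sketch, with made-up function names and the 6.8 gph burn rate assumed above:

```python
def fuel_used_gal(burn_gph: float, minutes: float) -> float:
    """Fuel burned over a given time at a constant burn rate."""
    return burn_gph * minutes / 60


def endurance_minutes(fuel_gal: float, burn_gph: float) -> float:
    """How long a given amount of fuel lasts at a constant burn rate."""
    return fuel_gal / burn_gph * 60


print(round(fuel_used_gal(6.8, 8), 2))  # 0.91
print(round(endurance_minutes(10, 6.8)))
```

Neither function knows anything about reserves, so treat the endurance number as time to dry tanks, not time you can plan to fly.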

Last updated: 2019-07-29 22:19:01 -0700

Flight Systems

Gyroscopic Systems

Instruments: heading indicator, attitude indicator, turn indicator.

Works off two principles: Rigidity in space and precession.

Rigidity in space means using angular momentum to maintain internal orientation regardless of what the aircraft does.

Heading indicator & attitude indicator both rely on the rigidity in space principle.

Heading indicator only measures change in heading, and steam gauge ones don’t correct for the earth’s rotation (meaning that it’ll precess noticeably over time as the earth rotates under the gyroscope). Must be calibrated against the magnetic compass in straight & level flight (or on the ground).

The attitude indicator shows bank & pitch information. It should show the correct attitude within 5 minutes of starting the engine. Some may have a tumble limit (gimbal lock). May have small acceleration errors (accelerate: slight pitch up, decelerate: pitch down) and roll-out errors (following a 180° turn, it’ll show a slight turn to the opposite direction).

Turn indicators work on precession. Turn coordinators show rate of turn & rate of roll. Turn & slip indicators show rate of turn only.

Pitot-Static System

Instruments: Altimeter, Vertical Speed Indicator (VSI), Airspeed Indicator.

Switching to an alternate static port (which is usually located in the cabin, so that it doesn’t freeze over) will cause a momentary increase of all 3 of these instruments. This is because, in an unpressurized cabin, there’s a lower air pressure than there is outside.

Altimeter

Aneroid barometer (barometer that does not use a liquid) that shows height above a given pressure level. Basically, there’s a stack of sealed metal (aneroid) wafers that expand/contract based on the atmospheric pressure as given by the static port. These wafers are mechanically linked to the steam gauge needle that displays this. “Sensitive” altimeters (most aircraft altimeters) have an altimeter setting knob that essentially moves the dial to correct for the current altimeter setting.

Uses just the static port.

Standard lapse rate: 1000 feet per inch of mercury (which means that increasing the altimeter setting by 0.01, from 29.92 to 29.93, increases the indicated altitude by 10 feet. Similarly, decreasing it will reduce the indicated altitude by 10 feet).

In the US, when operating below 18,000 MSL, regularly set the altimeter to a station within 100 nautical miles. At or above 18,000 MSL, set it to 29.92 inches of mercury. This is to allow for better aircraft separation.

“High to low - watch out below!” - Going from a high pressure area to a low pressure area will cause your altimeter to indicate a higher-than-actual altitude.

Same error when flying from warm air to cool air.
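The pressure half of that can be put in numbers using the 1000 feet per inch of mercury rule. A minimal sketch; the function name and the example pressures are made up:

```python
FEET_PER_INHG = 1000  # rule of thumb, good enough near sea level


def altimeter_error_ft(altimeter_setting_inhg: float, actual_pressure_inhg: float) -> float:
    """Indicated minus true altitude. Positive: the altimeter reads higher than you really are."""
    return (altimeter_setting_inhg - actual_pressure_inhg) * FEET_PER_INHG


# Hypothetical flight: set 30.12 at departure, then fly into 29.82 without resetting.
print(round(altimeter_error_ft(30.12, 29.82)))  # 300
```

In that made-up example the altimeter reads about 300 feet high, so you are 300 feet lower than you think. Hence "watch out below".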

Types of Altitudes

| Name | Description |

|---|---|

| Indicated | Uncorrected altitude indicated on the dial when set to local pressure setting |

| Pressure | Altitude above the 29.92 inches of mercury datum plane. This is the setting used when flying above 18,000 MSL. |

| Density | Pressure altitude corrected for nonstandard temperature. Used for performance calculations. “The altitude the airplane behaves like it’s at” |

| True | Actual altitude above mean sea level (MSL) |

| Absolute | Height above ground |

Vertical Speed Indicator

Basically, but-not-actually, uses R=D/T to give you rate of climb. Also gives rate trend. There is a noticeable lag in normal VSIs. Instantaneous VSIs (IVSI) interpolate standard VSI data with accelerometers to remove most of the lag.

Uses just the static port.

Works via a diaphragm inside the instrument connected directly to the static source. Outside of that is an area that receives the same static pressure, but at a much slower rate than inside the diaphragm (this is where the lag comes from). As the diaphragm expands/contracts, a mechanical linkage (in a steam gauge) moves the pointer needle to display rate of climb.

Airspeed Indicator

Uses both static port and ram air. Essentially, indicated airspeed = ram pressure - static pressure.

A diaphragm in the instrument receives ram pressure from the pitot tube. The area outside the diaphragm is sealed and connected to the static port. A mechanical linkage converts the expansion and contraction of the diaphragm to airspeed shown on the display dial.
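As a sketch of the "ram pressure - static pressure" idea, the difference can be turned into a speed with the low-speed (incompressible) relation q = ½ρ₀V², using sea-level standard density. This is an illustration under those assumptions, not how any particular instrument is calibrated, and the names are mine.

```python
import math

SEA_LEVEL_DENSITY = 1.225        # kg/m^3, ISA sea-level air density
MS_TO_KNOTS = 3600.0 / 1852.0    # metres per second to knots


def indicated_airspeed_kt(pitot_pa, static_pa):
    """Speed implied by the pitot/static pressure difference (incompressible approximation)."""
    dynamic_pa = max(pitot_pa - static_pa, 0.0)   # the needle cannot go below zero
    return math.sqrt(2.0 * dynamic_pa / SEA_LEVEL_DENSITY) * MS_TO_KNOTS


# A pressure difference of roughly 1621 Pa corresponds to about 100 knots.
print(indicated_airspeed_kt(101325.0 + 1621.0, 101325.0))
```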

Types of Airspeeds

| Name | Description |
|---|---|
| Indicated airspeed (IAS) | As indicated by the airspeed indicator |
| Calibrated airspeed (CAS) | IAS corrected for instrument & position errors |
| Equivalent airspeed (EAS) | CAS corrected for compressibility error |
| True airspeed (TAS) | EAS corrected for nonstandard temperature and pressure. Actual speed through the air. Used for performance calculations |
| Mach number | Ratio of TAS to the local speed of sound |
| Ground speed | TAS corrected for wind. Actual speed over the ground |
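The last two rows are simple enough to sketch in code. This assumes dry air treated as an ideal gas for the speed of sound, and it adds the wind vector to the airplane's heading vector directly (so it ignores any wind correction angle). Function names are illustrative only.

```python
import math

MS_TO_KNOTS = 3600.0 / 1852.0


def speed_of_sound_kt(oat_c):
    """Local speed of sound from outside air temperature (ideal gas, dry air)."""
    return math.sqrt(1.4 * 287.05 * (oat_c + 273.15)) * MS_TO_KNOTS


def mach_number(tas_kt, oat_c):
    """Ratio of TAS to the local speed of sound."""
    return tas_kt / speed_of_sound_kt(oat_c)


def ground_speed_kt(tas_kt, heading_deg, wind_from_deg, wind_kt):
    """TAS corrected for wind: add the air-velocity and wind vectors."""
    heading = math.radians(heading_deg)
    wind_to = math.radians(wind_from_deg + 180.0)   # direction the wind is blowing toward
    north = tas_kt * math.cos(heading) + wind_kt * math.cos(wind_to)
    east = tas_kt * math.sin(heading) + wind_kt * math.sin(wind_to)
    return math.hypot(north, east)


print(ground_speed_kt(100, 360, 360, 20))   # direct headwind: about 80 kt
print(ground_speed_kt(100, 360, 180, 20))   # direct tailwind: about 120 kt
print(mach_number(100, 15.0))               # roughly Mach 0.15 at 15 C
```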

Errors

Static Port blocked

E.g. when it’s frozen over.

- Airspeed Indicator will only indicate correctly at the altitude the blockage occurred at.

- If you’re at a higher altitude, airspeed will be lower than actual.

- Lower altitude -> higher-than-actual airspeed

- Altimeter freezes at the blockage altitude.

- VSI drops to zero.

- After verifying a blockage in the static port, you should use an alternate static source or break the VSI window (in which case, expect reverse VSI information).

Pitot Tube blocked

Only affects the airspeed indicator.

- Ram air inlet clogged, but drain hole open: Airspeed drops to zero.

- Both clogged: Airspeed indicator will act as an altimeter (higher altitude corresponds to higher indicated speed)

- Turn on the pitot heat to melt ice that may be the cause of the blockage.
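The airspeed behaviour in both blockage cases falls out of a toy model of the instrument. The sketch below assumes the ISA troposphere pressure formula and the incompressible airspeed relation. All names are hypothetical, and it is only meant to show the direction of the errors, not realistic magnitudes.

```python
import math

SEA_LEVEL_PRESSURE = 101325.0   # Pa, ISA
SEA_LEVEL_DENSITY = 1.225       # kg/m^3, ISA
FT_TO_M = 0.3048
KT_TO_MS = 1852.0 / 3600.0


def static_pressure(altitude_ft):
    """ISA static pressure (Pa), valid in the troposphere."""
    return SEA_LEVEL_PRESSURE * (1.0 - 2.25577e-5 * altitude_ft * FT_TO_M) ** 5.25588


def dynamic_pressure(ias_kt):
    """Ram pressure rise (Pa) that produces a given indicated airspeed."""
    v = ias_kt * KT_TO_MS
    return 0.5 * SEA_LEVEL_DENSITY * v * v


def asi_reading(pitot_pa, static_pa):
    """What the airspeed indicator shows for the pressures it actually receives."""
    q = max(pitot_pa - static_pa, 0.0)
    return math.sqrt(2.0 * q / SEA_LEVEL_DENSITY) / KT_TO_MS


def reading_with_static_blocked(actual_ias_kt, altitude_ft, blocked_at_ft):
    """Static line holds the pressure from the blockage altitude; pitot still works."""
    pitot = static_pressure(altitude_ft) + dynamic_pressure(actual_ias_kt)
    return asi_reading(pitot, static_pressure(blocked_at_ft))


def reading_with_pitot_blocked(ias_when_blocked_kt, altitude_ft, blocked_at_ft):
    """Ram inlet and drain both clogged: pitot pressure is trapped; static still works."""
    trapped = static_pressure(blocked_at_ft) + dynamic_pressure(ias_when_blocked_kt)
    return asi_reading(trapped, static_pressure(altitude_ft))


# Static port blocked at 5000 ft while flying an actual 100 kt:
for altitude in (4800, 5000, 5200):
    print(altitude, reading_with_static_blocked(100, altitude, 5000))

# Pitot fully blocked at 5000 ft and 100 kt; the reading now follows altitude:
for altitude in (4500, 5000, 6000):
    print(altitude, reading_with_pitot_blocked(100, altitude, 5000))
```

With the static port blocked, the reading is only right at the blockage altitude, low above it, and high below it. With the pitot fully blocked, the reading climbs and descends with the airplane regardless of actual speed.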

Last updated: 2021-11-27 22:07:29 -0800

Flight Writeups

Writeups for flights I thought were significant. Sometimes with ATC logs and other tracks.

Last updated: 2019-08-06 10:59:21 -0700

2019-06-21

This flight was super fun. The plan was to take off from SMO, fly out to Malibu, do some stalls along the way, and then do ground reference maneuvers over and around Point Dume. Afterwards, the plan was to head back and do a little bit of pattern work around SMO.

Here’s the flight track for the entire flight

Stalls

Shortly after takeoff, we went along the coast and did first some power off stalls, and then some power on stalls. My instructor and I briefly went over recovering from a stall (lower the angle of attack by pitching down and applying full power), before doing some clearing turns and entering those stalls. With the exception of the first (in which my instructor had a slip of the tongue and told me to reduce power - should’ve been reduce AoA - and I listened to him instead of saying “nah, you got it wrong”), these were all fairly decent. I still need to work on my recovery - I pitch down too much - but he was really happy with them.

Additionally, in one of the power-off stalls, instead of step-by-step going from 30° flaps to 20° flaps, then 10° and finally no flaps, I went straight from 30° to no flaps, because I was distracted during that stall. Obviously, this is something I need to improve upon. Yay flight sims.

Ground Reference Maneuvers

I did really well on these. Even if you don’t take into account the fact that this is my first time doing GRM in a low-wing airplane.

Also, the wind wasn’t blowing all that much, which really helped with this.

Turns Around A Point

For this, we reduced our altitude to ~1200 AGL and tried to circle Point Dume. The first few attempts, my altitude control was way off - instead of maintaining altitude ± 50 ft, I varied by as much as 100 ft - and I came quite close to breaking the “at least 1000 ft above congested areas” regulation. Additionally, I felt that I wasn’t able to correctly maintain the desired radius for the circle - from my perspective, it felt more like an oval than a circle. However, after 2 or 3 tries at this, I finally set up an approach I liked, and while I still didn’t quite like the approach, the GPS track as recorded by ForeFlight shows almost a perfect circle around Point Dume. We made another circle around Point Dume before we went on to some S-Turns.

Obviously the low wing makes GRM harder - especially turns around a point - but another thing was the differing height of the surrounding terrain, which probably also messed with my reference points. Either way, I should practice these in a flight sim.

S-Turns

We did S-Turns along Zuma Beach.

I nailed these. The first turn wasn’t my best - I wasn’t setting up to be perpendicular to the beach when we were crossing it - but after that, I was on the money with these. Again, rustiness that quickly went away.

These also are much easier to do on a low wing airplane than turns around a point are.

Return to SMO

We had planned to do some more stalls on the way back, but there was quite a bit of other traffic around us, so we elected not to. Instead, we went directly to SMO.

For this, I’m going to link to this recording from live ATC. For reference, the recording starts at 4:37:50 PM PDT (23:37:50 UTC). Hereafter, I’m only going to refer to timestamps on the recording (which are directly in the mp3 file as mp3 chapters).

For context, there were at least 3 aircraft returning to land from maneuvers at approximately the same time. Plus another in the pattern, in addition to other kinds of traffic. ATC was busy. My radio work isn’t perfect - as you can hear, I repeat more of the instructions than I absolutely need to, I talk more-or-less in complete sentences - more than I need to, and I didn’t repeat the runway number when we were given clearance to land.

Obviously, with the amount of traffic involved, we didn’t do pattern work. Though we did go around, that was more due to a mistake on both our and ATC’s end (ATC gave the instruction to turn base when we were way too high and way too close to the field to safely make the landing - we should have immediately responded with “unable” and continued with our downwind) - See timestamp 04:20. Because we were too high, the instructor took controls for the first and only time in the flight. He put the plane in full flaps, and attempted to slip it to the field. However, he quickly realized that the plane was way too high to make it, and we went around. He hands it back to me at approximately 05:40.

Another thing of note: after the downwind, we’re asked to make a right 360 for spacing. On the ForeFlight track, it turned out that I made an almost perfect circle around an intersection.

The rest of the flight is uneventful, with a few funny moments on the comms.

Conclusion

Overall, I’m quite happy with the flight, and I’m very proud of how it turned out. Things to work on, though, are:

- Stalls: Get better at recovering, practice lowering flaps when you only have an electronic indicator of where the flaps are, not a mechanical one.

- Turns around a point: Practice them in a flight sim, especially in a low-wing airplane. I was sitting on the wing - unless I’m at a 45° bank, I won’t see the actual point I’m supposed to be orbiting. Recognize that and where you should expect the point just leading the edge to precess as you orbit.

- S-Turns: Practice them, be perpendicular to the line as you are crossing it. That is also the only instance you should be at 0° bank.

- Pattern Work: Actually, I’m pretty decent at it. Still need to practice landings, though.

- Radio Work: I’m pretty good at radio work too. Could be more terse, but I’m very happy with my radio work.

Last updated: 2021-11-11 18:46:23 -0800

2019-06-28 Pre-Solo Stage Check

This was a little bit of a wake-up call to me. As embarrassing as it is to admit, I have 70 hours and still haven’t soloed. But, finally the stars are aligning correctly, and I’m ready to solo (I’m actually about ready for a checkride - my flight instruction has been weird). Only thing remaining was a check with another instructor to make sure I’m not a danger to myself and others.

I don’t remember the entire details of the flight (didn’t get around to writing this up until a few days after the flight), but these are what stand out to me:

- When I transitioned to the SportCruiser, I made sure to redevelop a sight-picture needed to land safely, but I didn’t redevelop the sight-picture for other phases of flight - Vy, Vx, etc. - which led me to constantly readjust my pitch as I hunted for the correct airspeed.

- For airplanes with control sticks, it’s much easier to control the plane when your hand is not at the top of the stick. Despite being where the trim & PTT buttons are, keep your hand lower on the stick except when using the radio or trimming the plane.

- Apply trim when changing phases of flight.

- Apparently, the Rotax 912 ULS likes to cruise at 5200 RPM, for cooling reasons.

- When approaching a target altitude, lower the nose first, then reduce power.

- For stalls, recovering is not so much “push down” as “let off the pressure”. Obviously, this assumes that you were trimmed for cruise flight, not for a stall.

- My emergency procedures need work, massively.

- This instructor made it painfully clear - to the point where I had trouble sleeping - that I needed to work on my emergency procedures. I feel this reflects negatively on the instructor, as there are ways to get this point across without causing me to lose sleep or otherwise feel like I’m a terrible pilot.

- On my next flight with my regular instructor, this was all we did. 1.5 hobbs doing engine-out work. I now feel much better about this.

Overall, I did pass that stage check, but, obviously, I felt pretty terrible coming out of it.

Last updated: 2019-07-06 11:39:54 -0700

2019-07-26 First Solo

This has been a long time coming. According to my logbook, I have 73 logged pre-solo hours. That’s almost absurdly high. But, I did it. So… whatever.

ATC Logs here, which starts at 0122:28Z on 2019-07-27.

Pre-Takeoff

Before I soloed, my instructor and I did a couple laps in the pattern. Then we came back to the FBO, he got out, and I was going to go do at least 3 laps in the pattern.

I started off by instilling great confidence in him by starting the start engine checklist from the wrong point. Essentially, I skipped turning on the electrical system and went straight towards turning on the engine. I realized my error when the engine didn’t start after I turned the key to engage the starter. I did get it started after I had followed the checklist from the right starting point1. Once I got it started, copied the weather, etc., I advised ground that I was a student solo pilot and was ready to taxi. At the runup area, I snapped a photo of the empty right seat, before proceeding to do the runup. Something I noticed is that I needed to have the canopy partially open to visually verify that the rudder pedals controlled the rudder as expected - normally we have the canopy partially open anyway for cooling reasons. I had left it closed because with only one human in there, it didn’t heat up nearly as quickly. There were no issues with the rest of the runup. I informed ground that I was done and was allowed to taxi to the runway.

First Lap